Beaglebone Black timer capture driver

More info on: Hardware, Kernel Driver software

The Beaglebone Black has a hardware timer capture feature: the timer can save its counter value at the instant an input edge happens. Since the timer runs from a 24MHz clock, each capture has a resolution of one tick, or 41.7ns. That is much more precise than the usual method of taking timestamps in a GPIO interrupt handler. With this driver, we can also directly measure the latency and jitter added by the interrupt handler.
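As a rough illustration of the arithmetic, here is a minimal Python sketch of how a captured counter value could be turned into a latency figure. The 24MHz rate comes from the text above. The 32-bit counter width and all function names are assumptions made for this example, not taken from the driver.

```python
TIMER_HZ = 24_000_000   # timer clock rate stated in the text
COUNTER_BITS = 32       # assumption: the counter register is 32 bits wide


def tick_ns():
    """Duration of one timer tick in nanoseconds."""
    return 1e9 / TIMER_HZ


def ticks_between(captured, now, bits=COUNTER_BITS):
    """Ticks elapsed from the captured value to the current counter value.

    The modulo handles the counter wrapping around between the two reads.
    """
    return (now - captured) % (1 << bits)


def ticks_to_ns(ticks):
    """Convert a tick count to nanoseconds."""
    return ticks * 1e9 / TIMER_HZ


if __name__ == "__main__":
    print(f"one tick = {tick_ns():.1f} ns")
    # Hypothetical values: edge captured just before the counter wrapped,
    # counter read again in the interrupt handler just after.
    elapsed = ticks_between(0xFFFFFFF0, 0x00000090)
    print(f"{elapsed} ticks = {ticks_to_ns(elapsed) / 1000:.2f} us")
```

Reading the counter again inside the interrupt handler and subtracting the captured value is one way to get the handler latency in ticks. Whether the driver does exactly this is not stated here.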

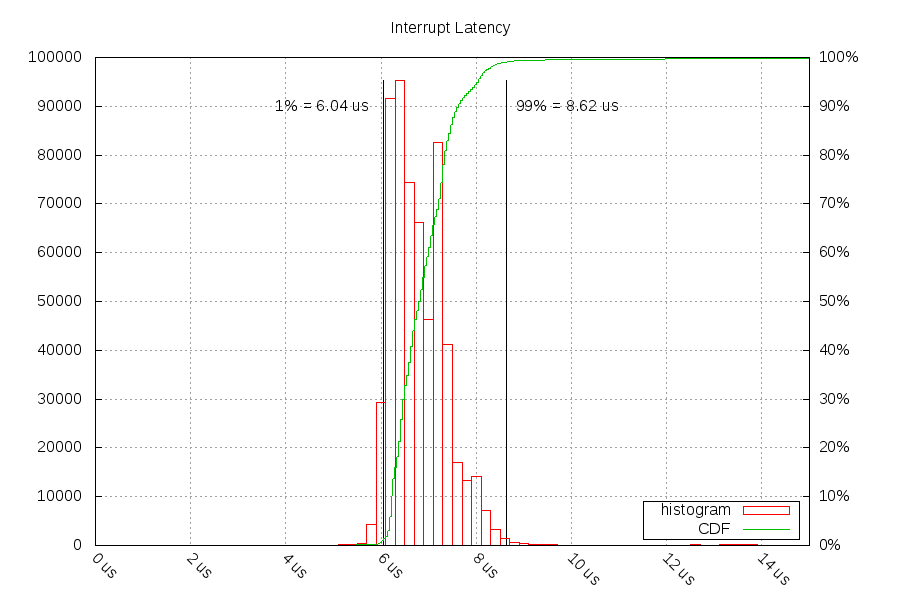

Below is the amount of time that passed between the PPS event happening and the Linux kernel running the interrupt handler. This delay should be roughly the same for pps-gmtimer and pps-gpio. The red boxes are histogram bins of latencies, and the green line is the cumulative distribution.

98% of the time, the interrupt gets called between 6.04 microseconds and 8.62 microseconds after the event. The mean is 6.92 microseconds and the stddev is 1.72 microseconds.
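For anyone wanting to produce the same kind of summary from their own latency log, here is a minimal Python sketch. It assumes the 98% range means the span between the 1st and 99th percentiles, which is a guess about how the figures above were computed. The function name and the sample data are made up for illustration.

```python
import statistics


def summarize(latencies_us):
    """Return mean, sample stddev, and the 1st/99th percentiles of a latency list."""
    data = sorted(latencies_us)
    # quantiles(n=100) returns 99 cut points: index 0 is the 1st percentile,
    # index 98 is the 99th percentile.
    cuts = statistics.quantiles(data, n=100, method="inclusive")
    return {
        "mean": statistics.fmean(data),
        "stddev": statistics.stdev(data),
        "p1": cuts[0],
        "p99": cuts[98],
    }


if __name__ == "__main__":
    # Made-up latencies in microseconds, not measurements from the board.
    sample = [6.1, 6.3, 6.5, 6.8, 6.9, 7.0, 7.2, 7.9, 8.4, 12.5]
    for key, value in summarize(sample).items():
        print(f"{key}: {value:.2f} us")
```

A few large outliers can push the standard deviation well above what the central 98% range suggests, so it is worth reporting both.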

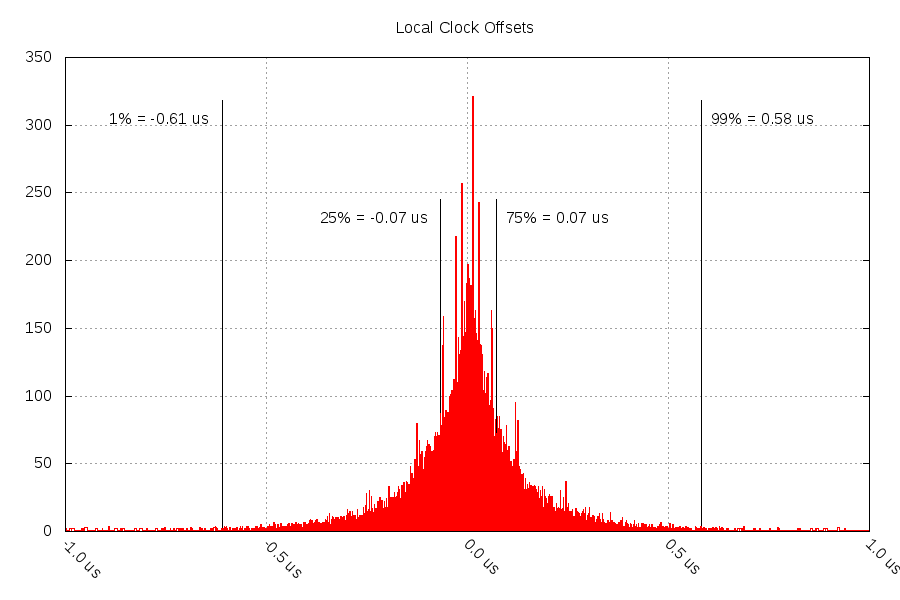

This latency affects the timestamp offsets sent to the NTP software. Below is a histogram of 6.5 days' worth of timestamps from the pps-gpio module. The black lines are just percentile markers, not part of the data.

The NTP software filters the timestamps it receives. I had it set up to take the best sample every 16 seconds, and then it uses linear regression to estimate the local clock's error. This reduced the clock offset to within +/- 0.07 microseconds 50% of the time, and within +/- 0.61 microseconds 98% of the time.
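To make the filtering idea concrete, here is a small Python sketch of "pick one sample per 16-second window, then fit a line". It is not the NTP software's actual algorithm. In particular, treating the "best" sample as the one with the median offset in its window is an assumption, and the function names are invented for this example.

```python
import statistics


def pick_per_window(samples, window=16):
    """Keep one (time_s, offset_us) sample per full window of `window` samples.

    Assumption: the "best" sample is the one with the median offset in its window.
    """
    picked = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        # median_low always returns a value that is actually in the data.
        median = statistics.median_low(offset for _, offset in chunk)
        picked.append(next(s for s in chunk if s[1] == median))
    return picked


def fit_line(points):
    """Ordinary least-squares fit of offset against time.

    Returns (slope, intercept): the slope estimates the clock's frequency
    error, and the intercept its offset at time zero.
    """
    n = len(points)
    mean_t = sum(t for t, _ in points) / n
    mean_o = sum(o for _, o in points) / n
    sxx = sum((t - mean_t) ** 2 for t, _ in points)
    sxy = sum((t - mean_t) * (o - mean_o) for t, o in points)
    slope = sxy / sxx
    return slope, mean_o - slope * mean_t


if __name__ == "__main__":
    # Made-up data: one PPS sample per second with a slow linear drift.
    samples = [(t, 0.5 + 0.001 * t) for t in range(64)]
    slope, intercept = fit_line(pick_per_window(samples))
    print(f"drift {slope:.4f} us/s, offset at t=0 {intercept:.3f} us")
```

Picking one sample per window throws away most of the interrupt jitter before the fit, which is why the filtered offsets end up much tighter than the raw latencies.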

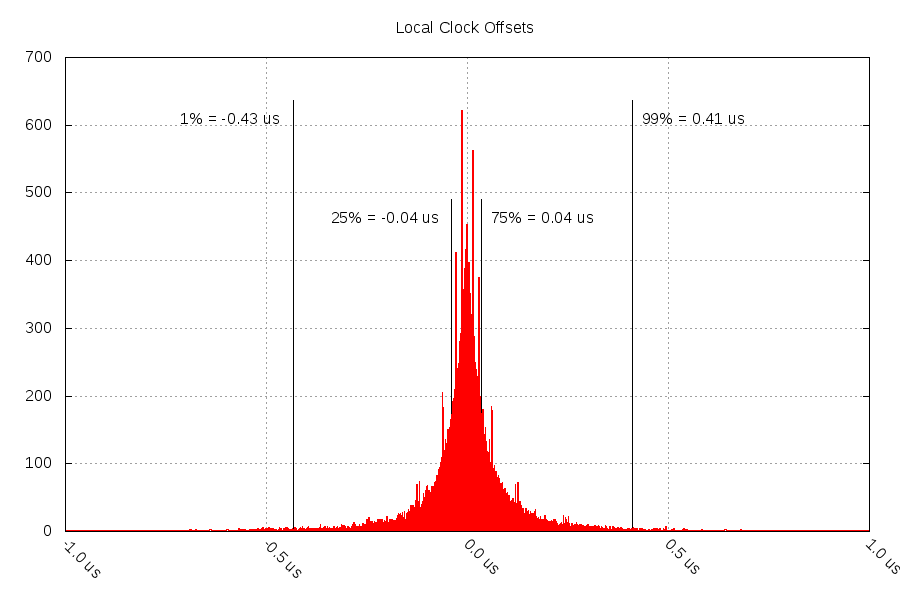

Compare that to data from 8 days with the pps-gmtimer module:

There is noticeably less noise in this graph. 50% of the time, the offset is within +/- 0.04 microseconds (maybe there's a relationship with the clock precision?), and 98% of the time it's within +/- 0.43 microseconds.

Both graphs have odd spikes at +/-14ns, +/-29ns, +/-59ns, and +/-62ns. I do not know where this noise is coming from: environment, software, or hardware.
