ch32v307 dev board, part 4

Next, I will cover connecting a GPS module to an NTP server over USB.

This is part of a series on the ch32v307 dev board

Previous project

I previously set up PPS over USB using a USB Full-Speed (12Mbit) stm32f103 device. One limitation of that device is that USB Full-Speed devices are polled by the host every 1ms at the fastest. I measured when the USB device was polled and sent a follow-up message so the timestamp could be adjusted. After adjusting the timestamp, the clock offset was +/-5 microseconds (μs).

This project will be using a USB High-Speed (480Mbit) device, which is polled every 125μs at the fastest. So at the very least, I would expect the polling delay to shrink.

Preparation

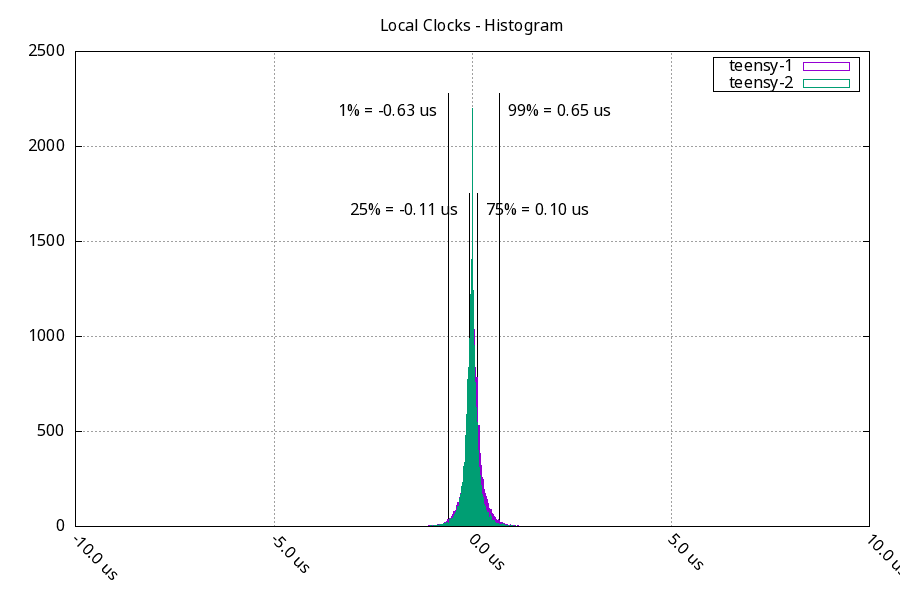

To start the measurement, I took a stratum 2 NTP server that gets its time from two local stratum 1 NTP servers. Both the client and the servers use hardware timestamps, along with NTP interleaved mode. This is the baseline to compare the results against.

These three systems agreed on their time within +/- 650 nanoseconds 98% of the time with this configuration.

Testing

A GPS was connected to the ch32v307, and the ch32v307 was connected to a server via USB. The ch32v307 turns the GPS PPS into carrier detect messages, and the Linux kernel is configured to timestamp them via the ppsldisc command. The ch32v307 measures the time between when the PPS happened and when the carrier detect message was received by the USB host, and sends that as a follow-up message. I've written a Python program that listens for that follow-up message, adjusts the PPS timestamp, and sends that timestamp into chrony via a Unix socket.
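The adjustment step can be sketched roughly as follows. This is a minimal illustration, not the actual program from this project: the function names, the nanosecond inputs, and the socket path are assumptions, the sample layout is assumed to match chrony's SOCK refclock sample structure on 64-bit Linux, and it assumes the system clock is already within half a second of true time.

```python
import socket
import struct

NS = 1_000_000_000
SOCK_MAGIC = 0x534F434B        # protocol identifier expected by chrony's SOCK refclock
SAMPLE_FORMAT = "@lldiiii"     # timeval (sec, usec), offset, pulse, leap, pad, magic


def build_sample(kernel_ts_ns: int, usb_delay_ns: int) -> bytes:
    """Pack one sample from a kernel PPS timestamp and the reported USB delay."""
    # The kernel timestamp marks when the host saw the carrier detect message;
    # subtracting the measured delay gives the system time of the PPS edge.
    pulse_ns = kernel_ts_ns - usb_delay_ns
    sec, nsec = divmod(pulse_ns, NS)
    usec = nsec // 1000
    tv_ns = sec * NS + usec * 1000

    # Assumption: the true time of the pulse is the nearest whole second.
    true_ns = ((pulse_ns + NS // 2) // NS) * NS
    offset_s = (true_ns - tv_ns) / 1e9   # true time minus system time

    # pulse=0 and leap=0: a complete sample with no leap second pending.
    return struct.pack(SAMPLE_FORMAT, sec, usec, offset_s, 0, 0, 0, SOCK_MAGIC)


def send_sample(sample: bytes, path: str = "/var/run/chrony.pps.sock") -> None:
    """Send one sample to chrony's datagram socket (hypothetical path)."""
    with socket.socket(socket.AF_UNIX, socket.SOCK_DGRAM) as sock:
        sock.sendto(sample, path)
```

The socket path has to match whatever the `refclock SOCK` line in chrony.conf points at.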

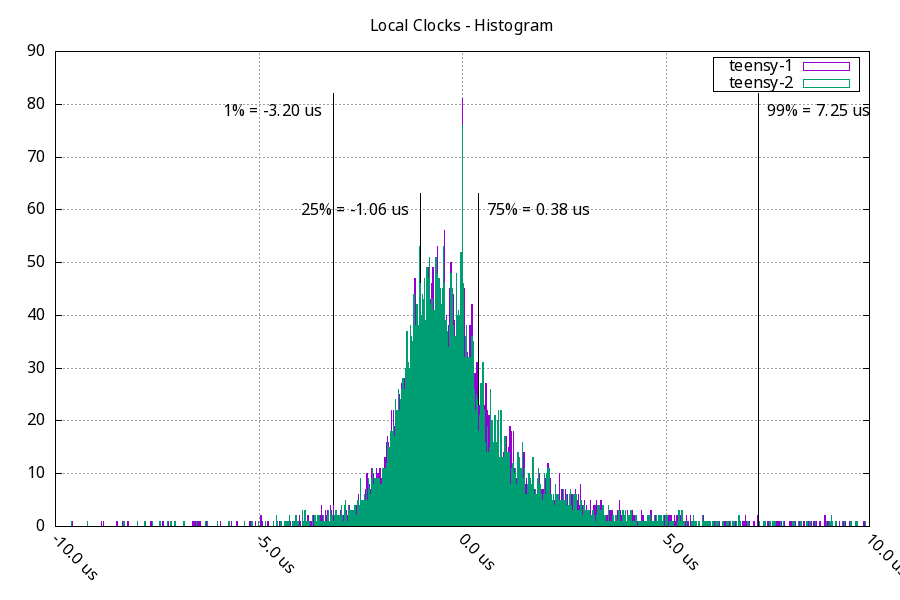

After the server started syncing against the PPS, its clock was farther out of sync from the network clocks (teensy-1 and teensy-2).

98% of the time, the clocks were within -3.2μs to +7.3μs. This isn't as good as a local network source with hardware timestamps, but it is better than using a time source over a WAN connection.
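For readers who want to reproduce a "98% of the time" figure from their own offset logs, here is a minimal sketch. It assumes the band is the range between the 1st and 99th percentiles of the logged offsets; the function name and the input list are hypothetical.

```python
import statistics


def central_98_percent(offsets_us):
    """Return the (1st percentile, 99th percentile) of a list of offsets."""
    # quantiles(n=100) returns the 99 cut points between percentiles 1..99.
    cuts = statistics.quantiles(offsets_us, n=100)
    return cuts[0], cuts[-1]
```

Feeding it the offsets chrony logs for a source gives a lower and upper bound that 98% of the samples fall between.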

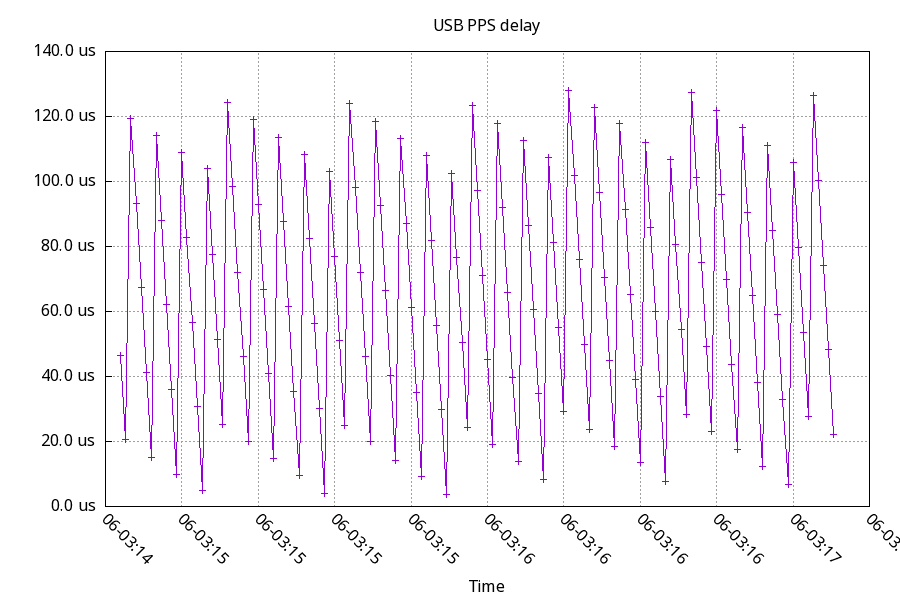

USB Delay

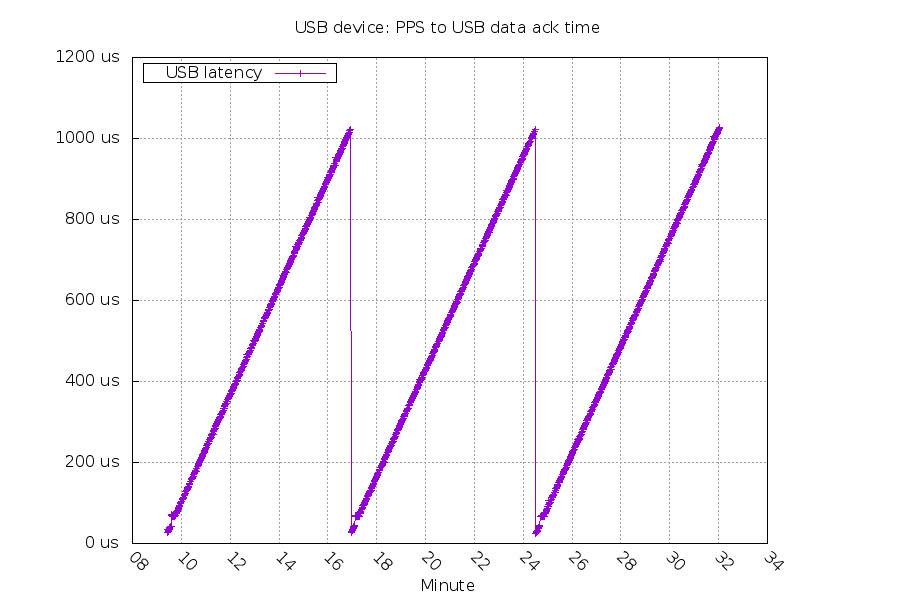

With the measurement of time between when the PPS happened and when the USB host picked up the carrier detect message, we can compare the High-Speed and Full-Speed USB delay. First up, the delay for High-Speed:

The expected value is between 0μs and 125μs, plus the interrupt delay on the microcontroller. That matches what we see. The triangle shape is due to the USB host's clock drifting in relation to the PPS message.

And then, the delay for Full-Speed:

The expected value is between 0μs and 1000μs, plus the interrupt delay on the microcontroller. This is also a match.
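The two ranges and the drift pattern can be illustrated with a toy model. This is a sketch under simple assumptions: the host polls at a fixed interval, its clock drifts at a constant rate against the PPS, and the microcontroller interrupt delay is a fixed value. The function and parameter names are hypothetical.

```python
def poll_delays_us(interval_us, drift_ppm, seconds, irq_us=0.0):
    """Delay from each PPS edge to the next host poll, one value per second."""
    delays = []
    for n in range(seconds):
        # A drift of 1 part per million moves the poll phase 1μs every second.
        phase = (n * drift_ppm) % interval_us
        delays.append(phase + irq_us)
    return delays


high_speed = poll_delays_us(interval_us=125, drift_ppm=2, seconds=1000)
full_speed = poll_delays_us(interval_us=1000, drift_ppm=2, seconds=1000)
```

In this model the delay ramps steadily across the polling interval and then wraps around, which is why the bounds are 125μs and 1000μs and why the plotted delay shows a repeating ramp rather than random scatter.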

USB interrupt coalescing

The USB host for these tests has an "Intel Corporation 9 Series Chipset Family USB xHCI Controller". The technical specs for xHCI controllers mention "Interrupt Moderation". It tries to combine multiple USB events into one interrupt by delaying for a configurable amount of time. By default, Linux is configured for a 40μs delay. I experimented with changing that to 4μs with the patch below.
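Before the patch itself, a quick sketch of what those two settings mean to the hardware. It assumes the controller counts the moderation interval in 250ns units and that the interval field is 16 bits wide; the function names are hypothetical.

```python
IMOD_UNIT_NS = 250  # assumed granularity of the xHCI moderation interval


def imod_register_value(interval_ns: int) -> int:
    """Convert an interval in nanoseconds to 250ns register units."""
    return (interval_ns // IMOD_UNIT_NS) & 0xFFFF


def max_interrupts_per_second(interval_ns: int) -> float:
    """Upper bound on the interrupt rate for a given moderation interval."""
    return 1e9 / interval_ns


default_units = imod_register_value(40_000)   # Linux default, 40μs
patched_units = imod_register_value(4_000)    # patched value, 4μs
```

The shorter interval lets the controller raise up to ten times as many interrupts per second, which is the cost of the lower delay.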

diff --git a/drivers/usb/host/xhci-pci.c b/drivers/usb/host/xhci-pci.c

index b9ae5c2a2527..ea04d67c3263 100644

--- a/drivers/usb/host/xhci-pci.c

+++ b/drivers/usb/host/xhci-pci.c

@@ -599,7 +599,7 @@ static int xhci_pci_setup(struct usb_hcd *hcd)

pci_read_config_byte(pdev, XHCI_SBRN_OFFSET, &xhci->sbrn);

/* imod_interval is the interrupt moderation value in nanoseconds. */

- xhci->imod_interval = 40000;

+ xhci->imod_interval = 4000;

retval = xhci_gen_setup(hcd, xhci_pci_quirks);

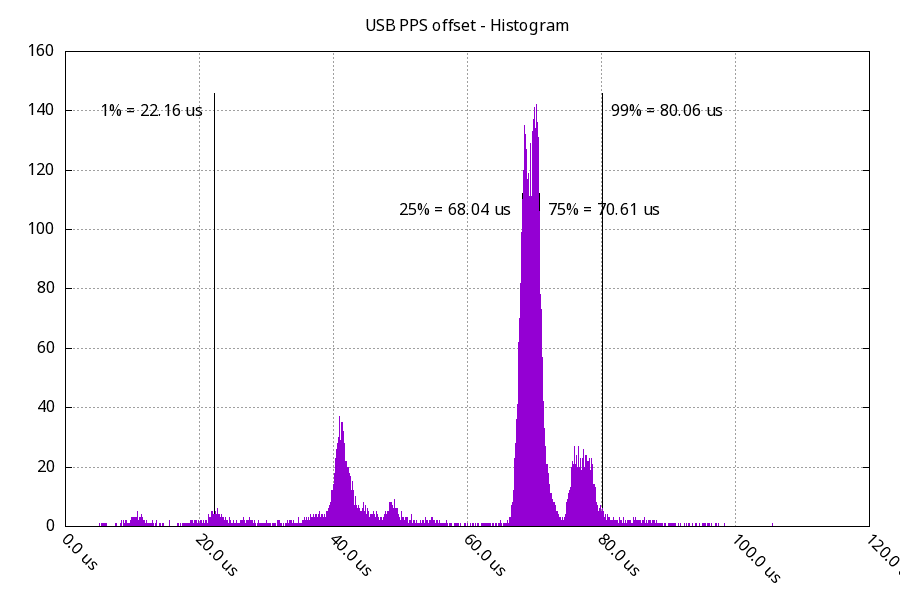

if (retval)The system was configured to get its time from the local stratum 1 NTP servers. Below is a graph of the offset of the USB PPS before this change compared to the NTP server time. Ideally, it would have an offset of 0μs with a very small timespan between 1st percentile and 99th percentile.

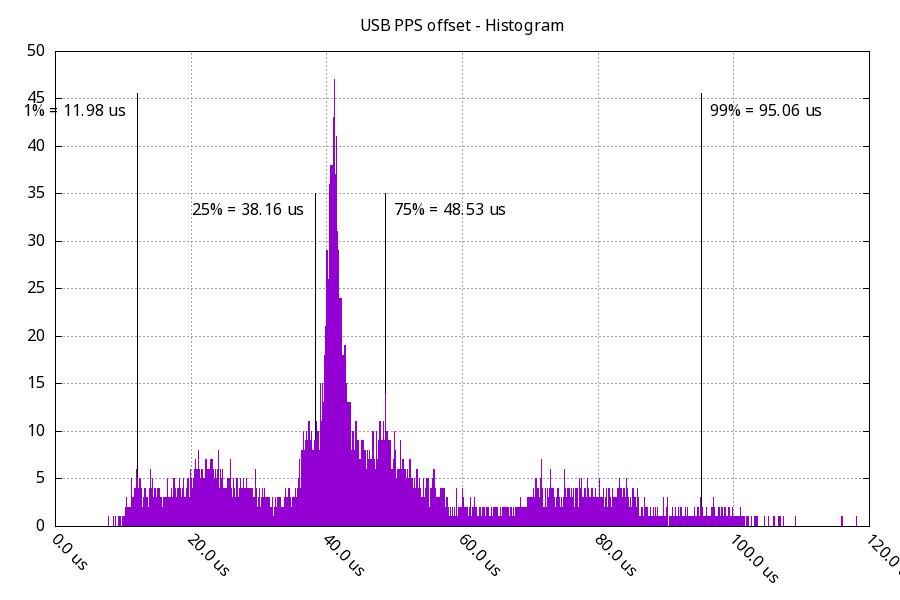

And then, after the change (graph below) I would expect the offset to get closer to 0μs.

This shifted the offset closer to 0μs, but by less than I would have expected (about 30μs vs 36μs for the 25th percentile). There are some strange distributions in these timings, which I want to experiment with further. I don't understand what is taking 38μs~48μs; I would have expected this timing to be much closer to 10μs~30μs.

I'm not sure this change was worth it. It lowered the offset but raised the jitter. The offset is easy to correct, and jitter is not.

Additional Links

- source: https://github.com/ddrown/usbhs-cdc/tree/pps-input-capture

- CDC PPS kernel patch (in Linux kernel 6.6 and later): https://git.kernel.org/pub/scm/linux/kernel/git/gregkh/usb.git/commit/?h=usb-next&id=3b563b901eefb47ce27a9897dea2739abe70ee5a
